Generalizing Learned Policies to Unseen Environments using Meta Strategy Optimization

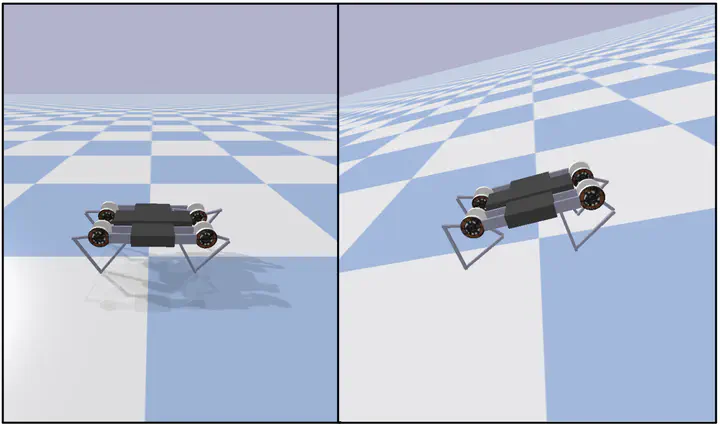

The Ghost Minitaur quadruped in PyBullet sim

Abstract

We attempt to reproduce the claims in [9]. The authors train a quadruped robot in simulation to walk forward using a deep reinforcement learning algorithm called Meta Strategy Optimization (MSO). By conditioning the learned policy on a small latent space, they adapt the policy to environments that differ significantly from the training environment, outperforming other generalization methods, including Domain Randomization (DR). Using MSO, we achieve a higher reward than DR on the training environment, but our MSO policy does not generalize to the test environments as well as DR.

Type

Publication

Deep Learning Final Project, 2021